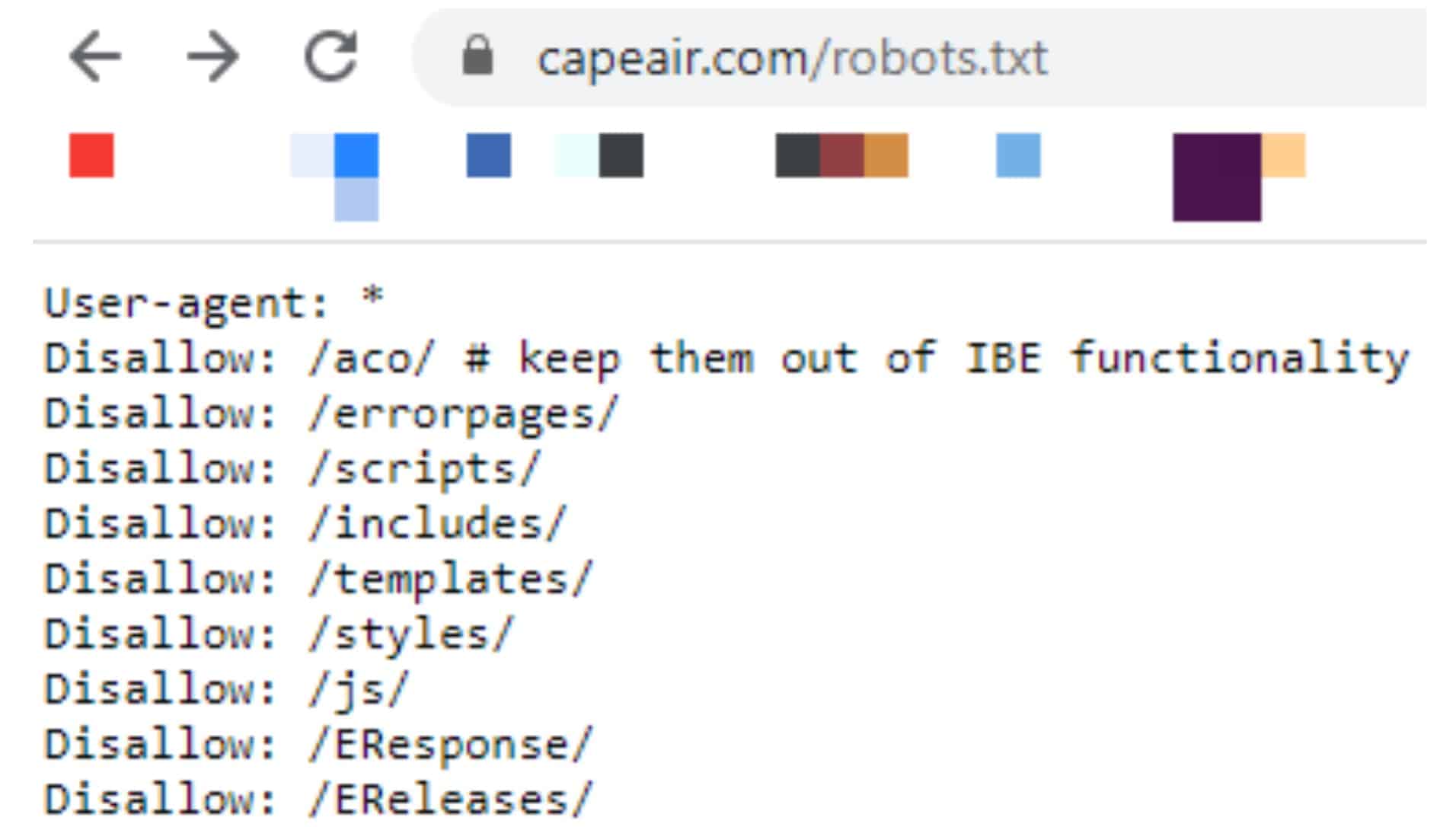

So what is this robots.txt file? robots.txt is the filename used for implementing the Robots Exclusion Protocol, also known as the Robots Exclusion Standard (RES), a standard used by websites to give instructions to visiting web crawlers and other web robots. It allows website owners to dictate which areas of the site web robots are allowed to visit.

It is a plain-text file and lives at the root of your website, so it is found at the `/robots.txt` path directly under your site's base URL. For more information on the purpose of the robots.txt file, Moz has a very informative guide.

A robots.txt file consists of one or more rules. Each rule names a robot and lists the paths that robot should stay out of: a `User-agent: bot name` line followed by one or more `Disallow: /path to file or folder/` lines. Having a template with up-to-date directives can help you create a properly formatted robots.txt file that names the required robots and restricts access to the relevant files.

Let's go over each step to get the robots.txt file added to your Next.js website. To add a robots.txt file to your Next.js website, all you need to do is add the file to your /public directory. On your development machine, navigate to your Next.js project directory. If you're using an older version of Next.js, this directory may be named /static instead of /public. If that's the case, make sure you use that directory name in the next steps. When you place a file inside the /public directory, it is served at the root URL of your website, so this file will be accessible at `/robots.txt` in the browser.
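As a rough illustration of the rule format described above, here is a minimal TypeScript sketch that renders a list of rules as robots.txt text. The names `RobotsRule` and `buildRobotsTxt` and the paths `/admin/` and `/drafts/` are hypothetical placeholders chosen for this example; they are not part of Next.js or of any library.

```typescript
// One rule group: a user agent plus the paths it should not crawl.
interface RobotsRule {
  userAgent: string;
  disallow: string[];
}

// Render rule groups as robots.txt text: a User-agent line followed by
// one Disallow line per path, with a blank line between groups.
function buildRobotsTxt(rules: RobotsRule[]): string {
  const groups = rules.map((rule) =>
    [
      `User-agent: ${rule.userAgent}`,
      ...rule.disallow.map((path) => `Disallow: ${path}`),
    ].join("\n")
  );
  return groups.join("\n\n") + "\n";
}

// Hypothetical example: keep every crawler out of two placeholder folders.
const robotsTxt = buildRobotsTxt([
  { userAgent: "*", disallow: ["/admin/", "/drafts/"] },
]);
console.log(robotsTxt);
```

The printed text can be saved by hand as `robots.txt` inside the /public directory (or /static on older versions of Next.js). For a file that rarely changes, writing it directly in a text editor works just as well.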

The robots.txt file indicates whether certain user agents (i.e. web-crawler robot software) can or can't crawl certain parts of your website. These instructions are given by "allowing" or "disallowing" the behavior of individual or all user agents.

If you want to instruct all robots to stay away from your site, put two directives in your robots.txt to disallow all: `User-agent: *` on the first line and `Disallow: /` on the second. The `User-agent: *` part means that it applies to all robots. The `Disallow: /` part means that it applies to your entire website.
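To make the effect of a disallow-all file concrete, the sketch below shows roughly how a crawler might decide whether a path is blocked. It is a deliberately simplified assumption of crawler behavior, not a full implementation of the Robots Exclusion Protocol: it reads only `User-agent` and `Disallow` lines and uses plain prefix matching. The function name `isDisallowed` and the crawler name `ExampleBot` are hypothetical.

```typescript
// Simplified check: does the robots.txt text block `path` for `userAgent`?
// Handles only User-agent and Disallow lines with plain prefix matching.
// Allow rules, wildcards in paths, and groups that list several user
// agents are not handled.
function isDisallowed(robotsTxt: string, userAgent: string, path: string): boolean {
  let applies = false; // whether the current group targets this user agent
  for (const rawLine of robotsTxt.split("\n")) {
    const line = rawLine.split("#")[0].trim(); // drop comments and whitespace
    const sep = line.indexOf(":");
    if (sep === -1) continue; // skip blank or malformed lines
    const field = line.slice(0, sep).trim().toLowerCase();
    const value = line.slice(sep + 1).trim();
    if (field === "user-agent") {
      applies = value === "*" || value.toLowerCase() === userAgent.toLowerCase();
    } else if (field === "disallow" && applies && value !== "" && path.startsWith(value)) {
      return true; // an empty Disallow value blocks nothing, so it is skipped
    }
  }
  return false;
}

// The disallow-all file: every robot, every path.
const disallowAll = "User-agent: *\nDisallow: /\n";
console.log(isDisallowed(disallowAll, "ExampleBot", "/"));
console.log(isDisallowed(disallowAll, "ExampleBot", "/blog/post-1"));
```

Because every URL path starts with `/`, the single `Disallow: /` line matches the whole site, which is why both checks report the path as blocked.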
